The post Building Infrastructure for ClickHouse Performance appeared first on The WebScale Database Infrastructure Operations Experts.

When you are building a very large analytics database system on ClickHouse, you have to carefully build and operate the infrastructure for performance and scalability. Is there one magic wand that takes care of full-stack performance? Unfortunately, the answer is no! If you are not proactively monitoring and sizing the database infrastructure, you may experience severe performance bottlenecks or even a total database outage with serious revenue impact, and all of this may happen during peak business hours or seasons. So where do we start planning the infrastructure for ClickHouse operations? As your ClickHouse database grows, query complexity also increases, so we strongly advocate investing in observability and monitoring infrastructure to troubleshoot more efficiently and proactively. At MinervaDB we use Grafana (https://grafana.com/grafana/plugins/vertamedia-clickhouse-datasource) to monitor ClickHouse operations and record performance counters for CPU, network, memory (RAM) and storage. This blog post is about understanding and monitoring the performance of these infrastructure components to build optimal ClickHouse operations.

Monitor for overheating CPUs

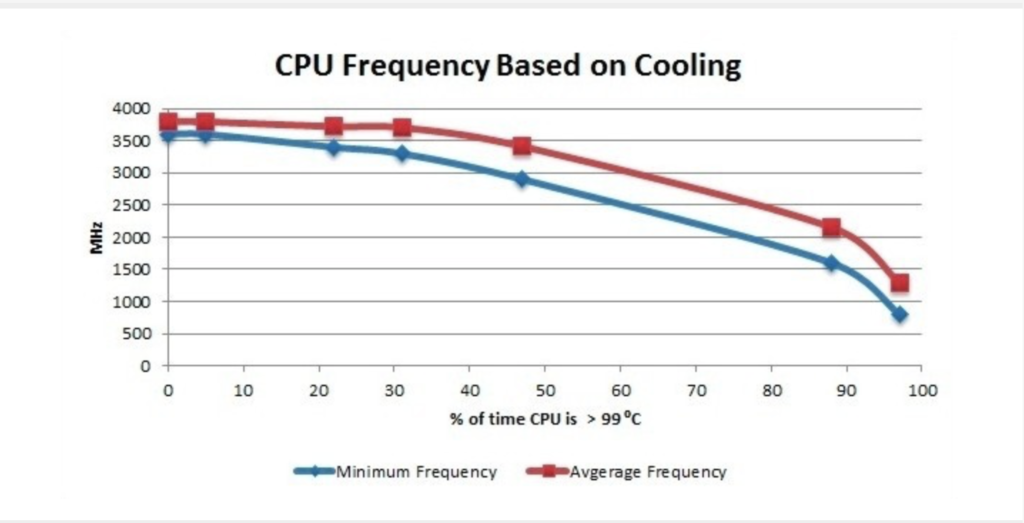

Overheating can damage the processor and even the motherboard. Monitor your systems closely if you are overclocking, and if the CPU temperature exceeds 100 °C, turn the system off. Most modern processors reduce their clock speed when they get hot in order to cool down (thermal throttling), which causes a sudden degradation in performance.

Monitor your current CPU speed (the value is reported in kHz):

```
sudo cat /sys/devices/system/cpu/cpu0/cpufreq/cpuinfo_cur_freq
```
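The command above reads cpu0 only. A minimal Python sketch that reads every CPU from the same cpufreq sysfs interface might look like this. It is an illustration, not part of the original setup: it reads scaling_cur_freq (world-readable, unlike cpuinfo_cur_freq) and cpuinfo_max_freq, converts kHz to MHz, and the helper names and the 50% threshold are assumptions. Idle CPUs also clock down, so a low reading only hints at throttling while the machine is under load.

```python
from __future__ import annotations

from pathlib import Path


def read_khz(path: Path) -> int | None:
    """Read a cpufreq sysfs value (kHz); None if the file is missing or unreadable."""
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        return None


def cpu_frequencies_mhz(root: str = "/sys/devices/system/cpu") -> dict[str, tuple[float, float]]:
    """Return {"cpuN": (current_MHz, max_MHz)} for every CPU exposing cpufreq."""
    result: dict[str, tuple[float, float]] = {}
    for cpufreq in sorted(Path(root).glob("cpu[0-9]*/cpufreq")):
        # scaling_cur_freq is world-readable; cpuinfo_cur_freq usually needs root.
        cur = read_khz(cpufreq / "scaling_cur_freq")
        top = read_khz(cpufreq / "cpuinfo_max_freq")
        if cur is not None and top:
            result[cpufreq.parent.name] = (cur / 1000, top / 1000)
    return result


def possibly_throttled(freqs: dict[str, tuple[float, float]], ratio: float = 0.5) -> list[str]:
    """CPUs running below `ratio` of their max clock (hypothetical heuristic threshold)."""
    return [cpu for cpu, (cur, top) in freqs.items() if cur < ratio * top]


if __name__ == "__main__":
    freqs = cpu_frequencies_mhz()
    for cpu, (cur, top) in freqs.items():
        print(f"{cpu}: {cur:.0f} MHz of {top:.0f} MHz max")
    print("below 50% of max:", possibly_throttled(freqs) or "none")
```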

You can also use turbostat to monitor CPU load, clock frequencies and idle-state (C-state) residency:

```
sudo ./turbostat --quiet --hide sysfs,IRQ,SMI,CoreTmp,PkgTmp,GFX%rc6,GFXMHz,PkgWatt,CorWatt,GFXWatt
Core    CPU     Avg_MHz Busy%   Bzy_MHz TSC_MHz CPU%c1  CPU%c3  CPU%c6  CPU%c7
-       -       488     90.71   3900    3498    12.50   0.00    0.00    74.98
0       0       5       0.13    3900    3498    99.87   0.00    0.00    0.00
0       4       3897    99.99   3900    3498    0.01
1       1       0       0.00    3856    3498    0.01    0.00    0.00    99.98
1       5       0       99.00   3861    3498    0.01
2       2       1       0.02    3889    3498    0.03    0.00    0.00    99.95
2       6       0       87.81   3863    3498    0.05
3       3       0       0.01    3869    3498    0.02    0.00    0.00    99.97
3       7       0       0.00    3878    3498    0.03
Core    CPU     Avg_MHz Busy%   Bzy_MHz TSC_MHz CPU%c1  CPU%c3  CPU%c6  CPU%c7
-       -       491     82.79   3900    3498    12.42   0.00    0.00    74.99
0       0       27      0.69    3900    3498    99.31   0.00    0.00    0.00
0       4       3898    99.99   3900    3498    0.01
1       1       0       0.00    3883    3498    0.01    0.00    0.00    99.99
1       5       0       0.00    3898    3498    56.61
2       2       0       0.01    3889    3498    0.02    0.00    0.00    99.98
2       6       0       0.00    3889    3498    0.02
3       3       0       0.00    3856    3498    0.01    0.00    0.00    99.99
3       7       0       0.00    3897    3498    0.01
```
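To track throttling over time you may prefer to parse turbostat's output rather than read it by eye. The sketch below assumes turbostat's whitespace-separated column output, with a header line starting with Core that repeats for each sample, and turns each row into a dict. In turbostat, Bzy_MHz is the average clock rate while a CPU was not idle, so a sustained drop below the rated frequency under load is a hint of thermal or power throttling. The function names are hypothetical.

```python
from __future__ import annotations


def parse_turbostat(text: str) -> list[dict[str, str]]:
    """Turn turbostat column output into one dict per row.

    A line whose first field is "Core" is a header and starts a new sample.
    Rows for sibling hyperthreads carry fewer columns; zip() drops the
    missing trailing columns, so those keys are absent from that row.
    """
    rows: list[dict[str, str]] = []
    header: list[str] | None = None
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0] == "Core":
            header = fields
        elif header is not None:
            rows.append(dict(zip(header, fields)))
    return rows


def busy_mhz_by_cpu(rows: list[dict[str, str]]) -> dict[str, int]:
    """Latest Bzy_MHz per logical CPU, skipping the "-" system summary row."""
    result: dict[str, int] = {}
    for row in rows:
        if row.get("CPU", "-") != "-" and "Bzy_MHz" in row:
            result[row["CPU"]] = int(row["Bzy_MHz"])  # later samples overwrite earlier ones
    return result
```

For example, you could capture a few samples with turbostat into a file, pass its text to parse_turbostat(), and alert when busy_mhz_by_cpu() reports values well below the CPU's rated frequency while Busy% is high.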

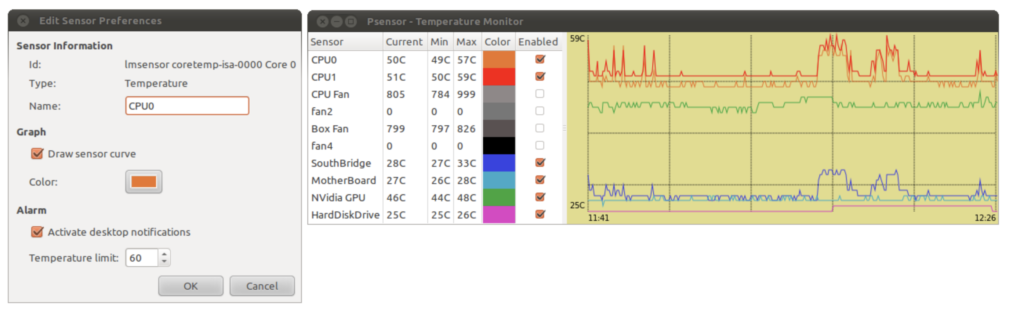

Using PSENSOR to monitor hardware temperature

psensor is a graphical hardware temperature monitor for Linux.

It can monitor:

- the temperature of the motherboard and CPU sensors (using lm-sensors).

- the temperature of the NVidia GPUs (using XNVCtrl).

- the temperature of ATI/AMD GPUs (not enabled in official distribution repositories, see the instructions for enabling its support).

- the temperature of the Hard Disk Drives (using hddtemp or libatasmart).

- the rotation speed of the fans (using lm-sensors).

- the CPU usage (since 0.6.2.10 and using Gtop2).

Since the thermal limit of many Intel CPUs is 100 °C, we can quantify overheating by measuring how much time the CPU temperature spent above 99 °C.
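One way to collect those measurements without a GUI is to sample the kernel's thermal sysfs interface. The Python sketch below is an illustration under stated assumptions, not psensor's method: it reads /sys/class/thermal/thermal_zone*/temp (reported in millidegrees Celsius; which zones exist and what they measure varies by machine) and totals the time spent above a threshold from evenly spaced samples. The function names are hypothetical.

```python
from __future__ import annotations

from pathlib import Path


def read_zone_temps_c(root: str = "/sys/class/thermal") -> dict[str, float]:
    """Return {"thermal_zoneN:type": degrees_C} for every readable thermal zone."""
    temps: dict[str, float] = {}
    for zone in sorted(Path(root).glob("thermal_zone*")):
        try:
            kind = (zone / "type").read_text().strip()
            milli_c = int((zone / "temp").read_text().strip())  # millidegrees Celsius
        except (OSError, ValueError):
            continue  # zone missing, unreadable, or reporting an error
        temps[f"{zone.name}:{kind}"] = milli_c / 1000.0
    return temps


def seconds_above(samples_c: list[float], interval_s: float, threshold_c: float = 99.0) -> float:
    """Total time above threshold_c, given samples taken every interval_s seconds."""
    return sum((interval_s for t in samples_c if t > threshold_c), 0.0)
```

For example, calling read_zone_temps_c() once per second in a loop, appending max(temps.values()) to a list, and passing that list to seconds_above(samples, 1.0) gives the number of seconds spent above 99 °C during that window.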

Choosing RAID for Performance

The table below explains the different RAID levels and how they affect performance:

| RAID Level | Advantages | Disadvantages |
|---|---|---|
| RAID level 0 – Striping | RAID 0 offers great performance for both reads and writes. There is no overhead from parity calculations, and all storage capacity is usable. | RAID 0 is not fault-tolerant. If one drive fails, all data in the RAID 0 array is lost. It should not be used for mission-critical systems. |
| RAID level 1 – Mirroring | RAID 1 offers excellent read speed and a write speed comparable to that of a single drive. If a drive fails, data does not have to be rebuilt; it just has to be copied to the replacement drive. | Software RAID 1 solutions do not always allow hot-swapping a failed drive, which means the failed drive can only be replaced after powering down the computer it is attached to. For servers used simultaneously by many people, this may not be acceptable; such systems typically use hardware controllers that support hot swapping. The main disadvantage is that the effective storage capacity is only half of the total drive capacity, because all data is written twice. |
| RAID level 5 – Striping with parity | Reads are very fast, while writes are somewhat slower because parity has to be calculated. If a drive fails, you still have access to all data, even while the failed drive is being replaced and the storage controller rebuilds the data on the new drive. | Technically complex. If one disk in an array of 4 TB disks fails and is replaced, restoring the data (the rebuild) may take a day or longer, depending on the load on the array and the speed of the controller. If another disk fails during that time, the data is lost for good. |
| RAID level 6 – Striping with double parity | Reads are very fast. If two drives fail, you still have access to all data, even while the failed drives are being replaced, so RAID 6 is more secure than RAID 5. | Technically complex. Rebuilding an array in which one drive failed can take a long time. Writes are slower than with RAID 5 because of the additional parity data that has to be calculated; in one report I read, write performance was 20% lower. |
| RAID level 10 – Combining RAID 1 & RAID 0 | High performance and fault tolerance. If a drive fails, all we need to do is copy the data from the surviving mirror to a new drive. | Expensive: half of the storage capacity goes to mirroring. |
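The capacity trade-offs in the table can be made concrete with a little arithmetic. This Python sketch uses the standard textbook formulas; the function name and the example of eight 4 TB drives are assumptions for illustration. It ignores controller metadata and hot spares, and for RAID 10 it reports the single failure that is always survivable, although more failures can be survived if they land in different mirror pairs.

```python
from __future__ import annotations


def raid_summary(level: int, drives: int, drive_tb: float) -> tuple[float, int]:
    """Return (usable capacity in TB, drive failures always survived).

    Textbook RAID arithmetic; real controllers reserve some space for
    metadata, so treat the capacity as an upper bound.
    """
    if level == 0:
        if drives < 2:
            raise ValueError("RAID 0 needs at least 2 drives")
        return drives * drive_tb, 0
    if level == 1:
        if drives < 2:
            raise ValueError("RAID 1 needs at least 2 drives")
        return drive_tb, drives - 1  # every drive holds a full copy
    if level == 5:
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return (drives - 1) * drive_tb, 1  # one drive's worth of parity
    if level == 6:
        if drives < 4:
            raise ValueError("RAID 6 needs at least 4 drives")
        return (drives - 2) * drive_tb, 2  # two drives' worth of parity
    if level == 10:
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        return drives // 2 * drive_tb, 1  # half the drives are mirrors
    raise ValueError(f"unsupported RAID level: {level}")


if __name__ == "__main__":
    # Example: eight 4 TB drives (RAID 1 shown as a two-drive mirror).
    for level, drives in ((0, 8), (1, 2), (5, 8), (6, 8), (10, 8)):
        usable, survives = raid_summary(level, drives, 4.0)
        print(f"RAID {level:>2}: {drives} drives -> {usable:.0f} TB usable, "
              f"survives {survives} failure(s)")
```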

General storage recommendations for ClickHouse:

- Use NCQ with a long queue.

- Use the CFQ scheduler for HDDs.

- For HDDs, enable the write cache to improve write performance.

- Use noop for SSDs.

- Ext4 is the most reliable file system option.
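To check which scheduler a disk is actually using, Linux exposes it in /sys/block/<device>/queue/scheduler, with the active one in square brackets, and /sys/block/<device>/queue/rotational reads 1 for spinning disks. The helper below is a sketch, not a ClickHouse tool. Note that the cfq and noop schedulers were removed in Linux 5.0 together with the legacy block layer; the sketch assumes bfq and none are acceptable stand-ins on newer kernels, which is a judgment call rather than official guidance.

```python
from __future__ import annotations

from pathlib import Path

# Assumption: on blk-mq kernels, treat bfq/none as stand-ins for cfq/noop.
RECOMMENDED = {True: {"cfq", "bfq"}, False: {"noop", "none"}}  # keyed by "is rotational"


def active_scheduler(text: str) -> str | None:
    """Return the bracketed entry from a line like 'noop deadline [cfq]'."""
    for token in text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    return None


def check_disk(device: str, sys_block: str = "/sys/block") -> tuple[str | None, bool]:
    """Return (active scheduler, whether it matches the HDD/SSD recommendation)."""
    queue = Path(sys_block) / device / "queue"
    rotational = (queue / "rotational").read_text().strip() == "1"
    sched = active_scheduler((queue / "scheduler").read_text())
    return sched, sched in RECOMMENDED[rotational]
```

For example, check_disk("sda") on an HDD running mq-deadline would return ("mq-deadline", False).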

You can read a detailed blog post about RAID here – https://minervadb.com/index.php/2019/08/04/raid-redundant-storage-for-database-reliability/

Huge Pages

What are Transparent Huge Pages, and why do they exist? Operating systems, database systems and many applications run in virtual memory. The operating system manages virtual memory using pages (contiguous blocks of memory) and maps virtual memory to physical memory through page tables, a data structure it maintains in RAM. The address translation logic (page table walking) is implemented by the CPU's memory management unit (MMU). The MMU also caches recently used translations in the Translation Lookaside Buffer (TLB).

Typically, a memory page is 4 KB, so 1 GB of memory is 262,144 pages and 128 GB is 33,554,432 pages. Obviously the TLB can't hold translations for all of these pages, and performance suffers from cache misses. There are two main ways to improve this. The first is to increase the TLB size, which is expensive and won't help significantly. The second is to increase the page size and therefore have fewer pages to map. Modern operating systems and CPUs support large 2 MB and even 1 GB pages. With 2 MB pages, 128 GB of memory becomes just 65,536 pages.
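Page counts like these follow from dividing the memory size by the page size. This short Python sketch does that arithmetic, assuming binary units (1 GB = 2^30 bytes, 4 KB = 4,096 bytes), for the three page sizes mentioned above; it is purely illustrative.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def pages_needed(memory_bytes: int, page_bytes: int) -> int:
    """Pages required to map memory_bytes (ceiling division)."""
    return -(-memory_bytes // page_bytes)


# Each page needs its own page-table entry, and caching its translation uses one TLB entry.
for label, page in (("4 KB", 4 * KIB), ("2 MB", 2 * MIB), ("1 GB", GIB)):
    print(f"{label} pages: 1 GB -> {pages_needed(GIB, page):,} pages, "
          f"128 GB -> {pages_needed(128 * GIB, page):,} pages")
```

For 4 KB pages this gives 262,144 and 33,554,432 pages; with 2 MB pages the 128 GB figure drops to 65,536, and with 1 GB pages to 128.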

Transparent Huge Pages support exists in Linux for performance. THP manages large pages automatically and transparently for applications. The benefits are clear: no changes are required on the application side, the number of TLB misses goes down, and page table walks become cheaper. Logically, the feature can be divided into two parts: allocation and maintenance. THP takes the regular (“higher-order”) memory allocation path, and it requires the OS to find a contiguous, aligned block of memory. It suffers from the same issue as regular pages, namely fragmentation. If the OS can't find a contiguous block of memory, it will try to compact, reclaim or page out other pages. That process is expensive and can cause latency spikes (of up to seconds). This was addressed in the 4.6 kernel with the “defer” defrag option, under which the OS falls back to a regular page if it can't allocate a large one. The second part is maintenance. Even if an application touches just 1 byte of memory, it will consume a whole 2 MB page, which is a waste of memory. There is also a background kernel thread called “khugepaged” that scans pages and tries to defragment and collapse them into huge pages. Although it runs in the background, it locks the pages it works with, so it can cause latency spikes too. Another pitfall lies in large-page splitting: not all parts of the OS work with large pages (swap, for example), so the OS splits large pages into regular ones for them. This can also degrade performance and increase memory fragmentation.

Why do we recommend disabling Transparent Huge Pages for ClickHouse performance?

Transparent Huge Pages (THP) is a Linux memory management system that reduces the overhead of Translation Lookaside Buffer (TLB) lookups on machines with large amounts of memory by using larger memory pages.

However, in our experience ClickHouse often performs poorly with THP enabled, because database workloads tend to have sparse rather than contiguous memory access patterns. When running ClickHouse on Linux, disable THP for the best performance.

```
$ echo 'never' | sudo tee /sys/kernel/mm/transparent_hugepage/enabled
```
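Writing to this sysfs file only lasts until the next reboot, so the setting has to be reapplied at boot (for example from a startup script or your configuration management). To verify it, read the same file back: the active mode is the one in square brackets, as in always madvise [never]. The small Python check below is a sketch built on that format; the function names are hypothetical, and it also reports the related defrag setting.

```python
from __future__ import annotations

from pathlib import Path

THP_DIR = "/sys/kernel/mm/transparent_hugepage"


def active_mode(text: str) -> str | None:
    """Return the bracketed (active) entry, e.g. 'never' from 'always madvise [never]'."""
    for token in text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    return None


def thp_status(thp_dir: str = THP_DIR) -> dict[str, str | None]:
    """Active modes of the THP 'enabled' and 'defrag' settings."""
    return {name: active_mode((Path(thp_dir) / name).read_text())
            for name in ("enabled", "defrag")}


def thp_disabled(thp_dir: str = THP_DIR) -> bool:
    """True when THP is set to 'never', as recommended above for ClickHouse."""
    return thp_status(thp_dir)["enabled"] == "never"
```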

Yandex recommends using perf top to watch the time spent in the kernel on memory management.

RAM

ClickHouse benefits greatly from investing in high-quality RAM. As data volumes increase, caching increasingly benefits frequently executed, sort- and search-intensive analytical queries. Yandex recommends that you do not disable overcommit: the value of /proc/sys/vm/overcommit_memory should be 0 or 1. To set it to 0, run:

```
$ echo 0 | sudo tee /proc/sys/vm/overcommit_memory
```
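Like the THP setting, a value written with tee does not survive a reboot; the usual way to persist it is a vm.overcommit_memory entry in /etc/sysctl.conf or in a file under /etc/sysctl.d/. A minimal check, assuming you only want to flag mode 2 (strict accounting), which the recommendation above rules out, could look like this. The function names are illustrative.

```python
from pathlib import Path

# Meaning of each vm.overcommit_memory value.
MODES = {0: "heuristic overcommit", 1: "always overcommit", 2: "strict accounting (no overcommit)"}


def overcommit_mode(path: str = "/proc/sys/vm/overcommit_memory") -> int:
    """Read the current vm.overcommit_memory value."""
    return int(Path(path).read_text().strip())


def overcommit_ok(mode: int) -> bool:
    """Per the recommendation above, ClickHouse hosts should use 0 or 1, not 2."""
    return mode in (0, 1)


def describe(mode: int) -> str:
    """One-line status message for a monitoring check."""
    status = "OK" if overcommit_ok(mode) else "consider setting it to 0"
    return f"vm.overcommit_memory = {mode} ({MODES.get(mode, 'unknown')}): {status}"
```

On a host, print(describe(overcommit_mode())) would produce a one-line status suitable for a cron job or monitoring agent.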

Conclusion

Visibility into ClickHouse operations is very important for building optimal, scalable and highly available data analytics platforms. Too often, the hardware infrastructure is measured only once there is already a performance bottleneck, and this reactive approach to troubleshooting performance is very expensive. When we work with our customers, we plan and conduct regular performance audits of their ClickHouse operations to right-size their infrastructure.

References:

- https://alexandrnikitin.github.io/

- https://blogs.intel.com

- https://www.kernel.org/doc/Documentation/vm/transhuge.txt

- https://clickhouse.yandex/docs/en/


The post Database Replication from MySQL to ClickHouse for High Performance WebScale Analytics appeared first on The WebScale Database Infrastructure Operations Experts.

MySQL works great for Online Transaction Processing (OLTP) systems, but its performance degrades with analytical queries on very large database infrastructure. You can optimize MySQL query performance with InnoDB compression, but why combine OLTP and OLAP (Online Analytical Processing) when columnar stores can deliver high-performance analytical queries more efficiently? I have seen several companies build dedicated MySQL servers for analytics, only to end up spending more money over time fine-tuning MySQL for analytics with no significant improvement. There is no point in blaming MySQL for what it was not built for: MySQL / MariaDB is a poor choice for columnar analytics and big data solutions. Columnar database systems are best suited for handling large quantities of data. Data stored in columns is typically easier to compress, and it is also easier to access on a per-column basis. Queries usually ask for data stored in a couple of columns, and being able to retrieve just those columns, instead of reading all of the rows and filtering out unneeded data, makes data access faster. So how can you combine the best of both? Use MySQL purely for transaction processing, and archive MySQL transactional data to a columnar store like ClickHouse for data analytics. This post is about archiving and replicating data from MySQL to ClickHouse; you can continue reading from here.
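The I/O argument for columnar storage can be seen with back-of-the-envelope arithmetic. The Python sketch below is a simplified model, not how ClickHouse or InnoDB actually lay out data: it assumes fixed-width, uncompressed values, ignores indexes and caching, and compares how many bytes a full scan touches when a query needs only a few of a table's columns. The table shape is hypothetical.

```python
from __future__ import annotations


def bytes_scanned(rows: int, widths: dict[str, int], needed: set[str]) -> tuple[int, int]:
    """Return (row_store_bytes, column_store_bytes) for a full scan of `rows` rows.

    Simplified model: fixed-width, uncompressed values, no indexes or caching.
    A row store reads whole rows; a column store reads only the needed columns.
    """
    unknown = needed - set(widths)
    if unknown:
        raise KeyError(f"unknown columns: {sorted(unknown)}")
    row_store = rows * sum(widths.values())
    column_store = rows * sum(widths[c] for c in needed)
    return row_store, column_store


# Hypothetical table: 20 columns of 8-byte values; the query reads 2 of them.
widths = {f"col{i}": 8 for i in range(20)}
row_b, col_b = bytes_scanned(10_000_000, widths, {"col0", "col1"})
print(f"row store: {row_b / 1e6:,.0f} MB, column store: {col_b / 1e6:,.0f} MB")
```

With these made-up numbers the column store reads a tenth of the data before any compression, and compression generally widens the gap further because values within one column tend to be similar.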

Recommended Reading for ClickHouse:

- Why we recommend ClickHouse over many other columnar database systems ? https://minervadb.com/index.php/2018/03/06/why-we-recommend-clickhouse-over-many-other-columnar-database-systems/

- Benchmarking ClickHouse on my MacBook Pro (Super impressive performance) – https://minervadb.com/index.php/2018/01/23/benchmarking-clickhouse-2/

- ClickHouse GitHub – https://github.com/ClickHouse

- Altinity GitHub – https://github.com/Altinity

- What you should know about RAID to improve Database Systems Performance – https://minervadb.com/index.php/2019/08/04/raid-redundant-storage-for-database-reliability/


The post Why we recommend ClickHouse over many other columnar database systems ? appeared first on The WebScale Database Infrastructure Operations Experts.


The post Benchmarking ClickHouse on my MacBook Pro appeared first on The WebScale Database Infrastructure Operations Experts.

From the Bureau of Transportation Statistics (BTS) website, here is what the OnTime data covers:

Airline on-time data are reported each month to the U.S. Department of Transportation (DOT), Bureau of Transportation Statistics (BTS) by the 16 U.S. air carriers that have at least 1 percent of total domestic scheduled-service passenger revenues, plus two other carriers that report voluntarily. The data cover nonstop scheduled-service flights between points within the United States (including territories) as described in 14 CFR Part 234 of DOT’s regulations. Data are available since January 1995. The following statistics are available:

Summary Statistics – All and late flights (total number, average departure delay, average taxi-out and average scheduled departure) and late flights (total and percent of diverted and cancelled flights).

Origin Airport

Destination Airport

Origin and Destination Airport

Airline

Flight Number

Detailed Statistics – Departure and arrival statistics (scheduled departure time, actual departure time, scheduled elapse time, departure delay, wheels-off time and taxi-out time) by airport and airline; airborne time, cancellation and diversion by airport and airline.

Departures

Arrivals

Airborne Time

Cancellation

Diversion

Downloading the data

Data is actually available from October 1987 until today (BTS is usually a few months behind in publishing the latest data). However, the drop-down on their website (https://www.transtats.bts.gov) only goes back to 1995. Data comes as one .zip file per month, so I downloaded a few files. Here is a direct download of one of them:

```
root@CSQL:/home/shiv# wget http://www.transtats.bts.gov/Download/On_Time_On_Time_Performance_2013_5.zip
URL transformed to HTTPS due to an HSTS policy
--2018-01-23 18:01:08-- https://www.transtats.bts.gov/Download/On_Time_On_Time_Performance_2013_5.zip
Resolving www.transtats.bts.gov (www.transtats.bts.gov)... 204.68.194.70
Connecting to www.transtats.bts.gov (www.transtats.bts.gov)|204.68.194.70|:443... connected.
HTTP request sent, awaiting response... 200 OK
Length: 26562728 (25M) [application/x-zip-compressed]
Saving to: 'On_Time_On_Time_Performance_2013_5.zip'

On_Time_On_Time_Performance_201 100%[=======================================================>] 25.33M 207KB/s in 2m 16s

2018-01-23 18:03:25 (191 KB/s) - 'On_Time_On_Time_Performance_2013_5.zip' saved [26562728/26562728]
```
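Since there is one archive per month, the file names can be generated rather than typed. This Python sketch assumes the URL pattern from the wget command above (.../Download/On_Time_On_Time_Performance_<year>_<month>.zip, with unpadded months) still applies; BTS may have changed its download paths since this post was written, so check the site first. It only prints URLs, which you can pipe to wget -i - to fetch them.

```python
from __future__ import annotations

# URL pattern taken from the wget example above; BTS may have changed it since.
BASE = "https://www.transtats.bts.gov/Download/On_Time_On_Time_Performance_{year}_{month}.zip"


def monthly_urls(start: tuple[int, int], end: tuple[int, int]) -> list[str]:
    """URLs for every (year, month) from start to end inclusive; months are not zero-padded."""
    for _, month in (start, end):
        if not 1 <= month <= 12:
            raise ValueError(f"month out of range: {month}")
    urls = []
    year, month = start
    while (year, month) <= end:
        urls.append(BASE.format(year=year, month=month))
        year, month = (year + 1, 1) if month == 12 else (year, month + 1)
    return urls


if __name__ == "__main__":
    # Hypothetical usage: python3 bts_urls.py | wget -i -
    for url in monthly_urls((2013, 1), (2013, 6)):
        print(url)
```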

“ontime” table structure

```
desc ontime

DESCRIBE TABLE ontime

┌─name─────────────────┬─type───────────┬─default_type─┬─default_expression─┐
│ Year                 │ UInt16         │              │                    │
│ Quarter              │ UInt8          │              │                    │
│ Month                │ UInt8          │              │                    │
│ DayofMonth           │ UInt8          │              │                    │
│ DayOfWeek            │ UInt8          │              │                    │
│ FlightDate           │ Date           │              │                    │
│ UniqueCarrier        │ FixedString(7) │              │                    │
│ AirlineID            │ Int32          │              │                    │
│ Carrier              │ FixedString(2) │              │                    │
│ TailNum              │ String         │              │                    │
│ FlightNum            │ String         │              │                    │
│ OriginAirportID      │ Int32          │              │                    │
│ OriginAirportSeqID   │ Int32          │              │                    │
│ OriginCityMarketID   │ Int32          │              │                    │
│ Origin               │ FixedString(5) │              │                    │
│ OriginCityName       │ String         │              │                    │
│ OriginState          │ FixedString(2) │              │                    │
│ OriginStateFips      │ String         │              │                    │
│ OriginStateName      │ String         │              │                    │
│ OriginWac            │ Int32          │              │                    │
│ DestAirportID        │ Int32          │              │                    │
│ DestAirportSeqID     │ Int32          │              │                    │
│ DestCityMarketID     │ Int32          │              │                    │
│ Dest                 │ FixedString(5) │              │                    │
│ DestCityName         │ String         │              │                    │
│ DestState            │ FixedString(2) │              │                    │
│ DestStateFips        │ String         │              │                    │
│ DestStateName        │ String         │              │                    │
│ DestWac              │ Int32          │              │                    │
│ CRSDepTime           │ Int32          │              │                    │
│ DepTime              │ Int32          │              │                    │
│ DepDelay             │ Int32          │              │                    │
│ DepDelayMinutes      │ Int32          │              │                    │
│ DepDel15             │ Int32          │              │                    │
│ DepartureDelayGroups │ String         │              │                    │
│ DepTimeBlk           │ String         │              │                    │
│ TaxiOut              │ Int32          │              │                    │
│ WheelsOff            │ Int32          │              │                    │
│ WheelsOn             │ Int32          │              │                    │
│ TaxiIn               │ Int32          │              │                    │
│ CRSArrTime           │ Int32          │              │                    │
│ ArrTime              │ Int32          │              │                    │
│ ArrDelay             │ Int32          │              │                    │
│ ArrDelayMinutes      │ Int32          │              │                    │
│ ArrDel15             │ Int32          │              │                    │
│ ArrivalDelayGroups   │ Int32          │              │                    │
│ ArrTimeBlk           │ String         │              │                    │
│ Cancelled            │ UInt8          │              │                    │
│ CancellationCode     │ FixedString(1) │              │                    │
│ Diverted             │ UInt8          │              │                    │
│ CRSElapsedTime       │ Int32          │              │                    │
│ ActualElapsedTime    │ Int32          │              │                    │
│ AirTime              │ Int32          │              │                    │
│ Flights              │ Int32          │              │                    │
│ Distance             │ Int32          │              │                    │
│ DistanceGroup        │ UInt8          │              │                    │
│ CarrierDelay         │ Int32          │              │                    │
│ WeatherDelay         │ Int32          │              │                    │
│ NASDelay             │ Int32          │              │                    │
│ SecurityDelay        │ Int32          │              │                    │
│ LateAircraftDelay    │ Int32          │              │                    │
│ FirstDepTime         │ String         │              │                    │
│ TotalAddGTime        │ String         │              │                    │
│ LongestAddGTime      │ String         │              │                    │
│ DivAirportLandings   │ String         │              │                    │
│ DivReachedDest       │ String         │              │                    │
│ DivActualElapsedTime │ String         │              │                    │
│ DivArrDelay          │ String         │              │                    │
│ DivDistance          │ String         │              │                    │
│ Div1Airport          │ String         │              │                    │
│ Div1AirportID        │ Int32          │              │                    │
│ Div1AirportSeqID     │ Int32          │              │                    │
│ Div1WheelsOn         │ String         │              │                    │
│ Div1TotalGTime       │ String         │              │                    │
│ Div1LongestGTime     │ String         │              │                    │
│ Div1WheelsOff        │ String         │              │                    │
│ Div1TailNum          │ String         │              │                    │
│ Div2Airport          │ String         │              │                    │
│ Div2AirportID        │ Int32          │              │                    │
│ Div2AirportSeqID     │ Int32          │              │                    │
│ Div2WheelsOn         │ String         │              │                    │
│ Div2TotalGTime       │ String         │              │                    │
│ Div2LongestGTime     │ String         │              │                    │
│ Div2WheelsOff        │ String         │              │                    │
│ Div2TailNum          │ String         │              │                    │
│ Div3Airport          │ String         │              │                    │
│ Div3AirportID        │ Int32          │              │                    │
│ Div3AirportSeqID     │ Int32          │              │                    │
│ Div3WheelsOn         │ String         │              │                    │
│ Div3TotalGTime       │ String         │              │                    │
│ Div3LongestGTime     │ String         │              │                    │
│ Div3WheelsOff        │ String         │              │                    │
│ Div3TailNum          │ String         │              │                    │
│ Div4Airport          │ String         │              │                    │
│ Div4AirportID        │ Int32          │              │                    │
│ Div4AirportSeqID     │ Int32          │              │                    │
│ Div4WheelsOn         │ String         │              │                    │
│ Div4TotalGTime       │ String         │              │                    │
│ Div4LongestGTime     │ String         │              │                    │
│ Div4WheelsOff        │ String         │              │                    │
│ Div4TailNum          │ String         │              │                    │
│ Div5Airport          │ String         │              │                    │
│ Div5AirportID        │ Int32          │              │                    │
│ Div5AirportSeqID     │ Int32          │              │                    │
│ Div5WheelsOn         │ String         │              │                    │
│ Div5TotalGTime       │ String         │              │                    │
│ Div5LongestGTime     │ String         │              │                    │
│ Div5WheelsOff        │ String         │              │                    │
│ Div5TailNum          │ String         │              │                    │
└──────────────────────┴────────────────┴──────────────┴────────────────────┘

109 rows in set. Elapsed: 0.091 sec.
```

Load data to ClickHouse

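```
# Load every downloaded monthly archive, one INSERT per file.
# unzip -c streams the CSV to stdout; sed strips the ".00" suffix from numeric values.
for i in *.zip; do
    echo "$i"
    unzip -cq "$i" '*.csv' | sed 's/\.00//g' | clickhouse-client --query="INSERT INTO ontime FORMAT CSVWithNames"
done
```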
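If you prefer to do this preprocessing in Python, the sketch below mirrors the unzip -cq ... | sed 's/\.00//g' part of the load command and writes the cleaned CSV to stdout, ready to pipe into the same clickhouse-client invocation. It is an assumed equivalent, not the author's script; like the sed expression, it removes every ".00" in a line, including any inside text fields, and it should be run once per archive because CSVWithNames expects a single header line per INSERT.

```python
from __future__ import annotations

import sys
import zipfile
from collections.abc import Iterator
from typing import TextIO


def cleaned_csv_lines(zip_path: str) -> Iterator[str]:
    """Yield the lines of every .csv member, with ".00" removed as sed 's/\\.00//g' does."""
    with zipfile.ZipFile(zip_path) as archive:
        for name in archive.namelist():
            if not name.endswith(".csv"):
                continue  # mirrors unzip's '*.csv' filter
            with archive.open(name) as raw:
                for raw_line in raw:
                    yield raw_line.decode("utf-8", errors="replace").replace(".00", "")


def main(zip_path: str, out: TextIO = sys.stdout) -> None:
    """Write one archive's cleaned CSV to `out` (stdout by default)."""
    out.writelines(cleaned_csv_lines(zip_path))


if __name__ == "__main__" and len(sys.argv) == 2:
    # Hypothetical usage, one archive per INSERT:
    #   python3 clean_ontime.py On_Time_On_Time_Performance_2013_5.zip | \
    #       clickhouse-client --query="INSERT INTO ontime FORMAT CSVWithNames"
    main(sys.argv[1])
```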

I loaded only 2.5 million records for this benchmark (running in a VM on my MacBook).

```
select count(1) from ontime;

SELECT count(1) FROM ontime

┌─count(1)─┐
│  2530089 │
└──────────┘
```

Query performance

Query 1

The average number of flights per month (2,530,089 rows across 5 year-month groups)

```
select avg(c1) from (select Year, Month, count(*) as c1 from ontime group by Year, Month);

SELECT avg(c1) FROM ( SELECT Year, Month, count(*) AS c1 FROM ontime GROUP BY Year, Month )

┌──avg(c1)─┐
│ 506017.8 │
└──────────┘

1 rows in set. Elapsed: 0.094 sec. Processed 2.53 million rows, 7.59 MB (26.86 million rows/s., 80.57 MB/s.)
```

Query 2

The number of flights per day of the week, from 1980 to 2013

```
SELECT DayOfWeek, count(*) AS c FROM ontime WHERE (Year >= 1980) AND (Year <= 2013) GROUP BY DayOfWeek ORDER BY c DESC

┌─DayOfWeek─┬──────c─┐
│         3 │ 384020 │
│         5 │ 381632 │
│         1 │ 368063 │
│         4 │ 365107 │
│         2 │ 363124 │
│         7 │ 362386 │
│         6 │ 305757 │
└───────────┴────────┘

7 rows in set. Elapsed: 0.035 sec. Processed 2.53 million rows, 7.59 MB (71.33 million rows/s., 213.99 MB/s.)
```

Query 3

The number of flights delayed by more than 10 minutes, grouped by the day of the week, for 1980-2014

```
SELECT DayOfWeek, count(*) AS c FROM ontime WHERE (DepDelay > 10) AND (Year >= 1980) AND (Year <= 2014) GROUP BY DayOfWeek ORDER BY c DESC

┌─DayOfWeek─┬─────c─┐
│         5 │ 82774 │
│         1 │ 80595 │
│         3 │ 78867 │
│         4 │ 78455 │
│         2 │ 73523 │
│         7 │ 72340 │
│         6 │ 54453 │
└───────────┴───────┘

7 rows in set. Elapsed: 0.098 sec. Processed 2.53 million rows, 17.71 MB (25.79 million rows/s., 180.53 MB/s.)
```

Query 4

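The number of flights delayed by more than 10 minutes, by origin airport, for 1980-2014 (top 10). Origin is a FixedString(5) column, so the three-letter airport codes are padded with zero bytes, which the client displays as \0\0.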

```
SELECT Origin, count(*) AS c FROM ontime WHERE (DepDelay > 10) AND (Year >= 1980) AND (Year <= 2014) GROUP BY Origin ORDER BY c DESC LIMIT 10

┌─Origin──┬─────c─┐
│ ATL\0\0 │ 35945 │
│ ORD\0\0 │ 32616 │
│ DFW\0\0 │ 27470 │
│ DEN\0\0 │ 21119 │
│ LAX\0\0 │ 15454 │
│ IAH\0\0 │ 14651 │
│ DTW\0\0 │ 14040 │
│ SFO\0\0 │ 14018 │
│ EWR\0\0 │ 12524 │
│ PHX\0\0 │ 11718 │
└─────────┴───────┘

10 rows in set. Elapsed: 0.087 sec. Processed 2.53 million rows, 27.83 MB (29.07 million rows/s., 319.73 MB/s.)
```

Query 5

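Delays of more than 10 minutes by carrier, between 1980 and 2014: c is the number of delayed flights, c2 the total number of flights, and c3 the number of delayed flights per 1,000 flights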

```
SELECT Carrier, c, c2, (c * 1000) / c2 AS c3 FROM ( SELECT Carrier, count(*) AS c FROM ontime WHERE (DepDelay > 10) AND (Year >= 1980) AND (Year <= 2014) GROUP BY Carrier ) ANY INNER JOIN ( SELECT Carrier, count(*) AS c2 FROM ontime WHERE (Year >= 1980) AND (Year <= 2014) GROUP BY Carrier ) USING (Carrier) ORDER BY c3 DESC

┌─Carrier─┬─────c─┬─────c2─┬─────────────────c3─┐
│ PI      │ 12334 │  39594 │  311.5118452290751 │
│ EA      │ 10679 │  37632 │ 283.77444727891157 │
│ TW      │  6573 │  23761 │ 276.62977147426454 │
│ WN      │ 91808 │ 388242 │ 236.47106701490307 │
│ MQ      │ 33623 │ 143640 │ 234.07825118351434 │
│ EV      │ 32068 │ 140110 │  228.8773106844622 │
│ VX      │  1166 │   5128 │ 227.37909516380654 │
│ CO      │ 21934 │  98702 │ 222.22447366821342 │
│ F9      │  5790 │  26656 │ 217.21188475390156 │
│ AL      │  6634 │  31663 │ 209.51899693648738 │
│ DL      │ 58111 │ 277642 │ 209.30190677203018 │
│ OH      │  6888 │  33223 │ 207.32624988712638 │
│ UA      │ 36478 │ 176851 │ 206.26403017229194 │
│ FL      │ 15847 │  76950 │ 205.93892137751786 │
│ AA      │ 47797 │ 232915 │ 205.21220187622094 │
│ B6      │ 13743 │  67330 │ 204.11406505272538 │
│ NW      │ 11219 │  55516 │ 202.08588515022697 │
│ XE      │ 17577 │  87277 │  201.3932651213951 │
│ 9E      │ 16419 │  89637 │ 183.17212758124435 │
│ PA      │  1079 │   6080 │  177.4671052631579 │
│ PS      │  2306 │  13169 │ 175.10820867188093 │
│ OO      │ 33745 │ 193333 │ 174.54340438517997 │
│ YV      │ 10380 │  60881 │ 170.49654243524253 │
│ US      │ 17427 │ 132937 │ 131.09217147972348 │
│ HP      │  2024 │  15583 │ 129.88513123275365 │
│ AS      │  5824 │  52993 │ 109.90130771988753 │
│ HA      │  1534 │  22644 │  67.74421480303833 │
└─────────┴───────┴────────┴────────────────────┘

27 rows in set. Elapsed: 0.154 sec. Processed 5.06 million rows, 30.36 MB (32.95 million rows/s., 197.70 MB/s.)
```

Query 6

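Share of flights delayed by more than 10 minutes, by year. The query multiplies by 1,000, so the result is per 1,000 flights: 233.08 for 1988 means about 23.3% of flights were delayed.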

```
SELECT Year, c1 / c2 FROM ( SELECT Year, count(*) * 1000 AS c1 FROM ontime WHERE DepDelay > 10 GROUP BY Year ) ANY INNER JOIN ( SELECT Year, count(*) AS c2 FROM ontime GROUP BY Year ) USING (Year) ORDER BY Year ASC

┌─Year─┬─────divide(c1, c2)─┐
│ 1988 │ 233.08158828241218 │
│ 2009 │ 126.58476272716568 │
│ 2010 │ 227.19108136859793 │
│ 2013 │ 217.86337903405135 │
└──────┴────────────────────┘

4 rows in set. Elapsed: 0.074 sec. Processed 5.06 million rows, 20.24 MB (68.61 million rows/s., 274.44 MB/s.)
```

Conclusion

I am very impressed with the performance of ClickHouse in my MacBook VM. These tests were run on a cold cache, and no performance tuning was done on the ClickHouse server.


The post Benchmarking ClickHouse appeared first on The WebScale Database Infrastructure Operations Experts.

